I just got back from Norway. I attended an informative (and my maiden one for IGeLU – International Group for Ex Libris Users) conference organized by Ex Libris. It took place at the Clarion Hotel and Congress in Trondheim.

By the way, it was my first trip to Norway. Word of caution: If you are catching a connecting internal flight from an international flight, give yourself at least 2.5 to 3 hours or so. When I arrived at Oslo, there was a very long line at the Immigration. I pleaded with the immigration officers and they allowed me to go to another line (which was way much shorter). However, I noted that I had less than 40 mins to – 1). get my luggage at the baggage belt 2). get onto to the departure hall 3). check-in my luggage 4). go through security check and make a mad dash (do a Usain Bolt dash) to the gate. Thank God, somehow i made it “thru the rain”.

Back to the main story: IGeLU provides a platform for Ex Libris and also Proquest users to network. There are many interesting sessions and meetings during this event. Participants had the opportunity to bring up issues, get product updates and related matters. I was a newbie to this conference and meetings. But I learnt quite a lot when I was there. Lot of focus on Alma, Rosetta and Primo (being Ex Libris products).

Some of the conference highlights (for me) were:

- meeting up with the Summon Product Working Group (Summon PWG which I am a member) esp Daniel Forsman, Library Director, Chalmers University of Technology

- plenary session given by Matt J Borg, Senior Librarian & Solution Expert, Ex Libris and Deputy Chair, UXLibs Committee – “A matter of perspective. User Experience in Libraries and You”.

- Presentation of the Azriel Morag Award for Innovation

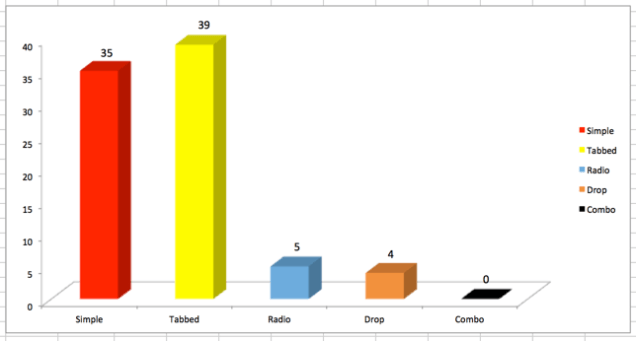

- Summon Product Update by Brent Cook who is the Director of Product Management, Discovery and Delivery, Ex Libris Summon PWG

- 360 Product Updates

- break-out session – “Standing on the shoulders of giants” – Establishing Innovation at Lancaster University Library given by Masud Khokar, Head of Digital Innovation, Lancaster University

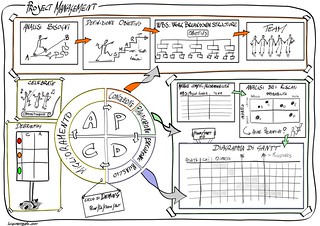

One of the things that stood out was the topic of usability, which is close to my heart. Matt used various examples such as the switch of left-hand driving to right-hand driving in Sweden (1967) – with regards to user experience. He elaborated on the impacts of such initiative when user perspectives are not taken into full account. Just as it is with usability studies in libraries, it’s always important to note:

- behavioral patterns of our users when using the library; and this is not limited to just websites

- techniques deployed when eliciting information on users’ behavior

among others. Usability testing can be a simple information gathering process involving some library users and asking them simple and straight-forward questions to complex testings involving “follow the user” behavior method from the moment they step inside the library. It boils down to how much resources that the library has and how the library can maximize those resources.

Another session that I found particularly noteworthy was Lancaster University Library’s approach and practices to innovation. According to Masud, the library has taken 4 ways of developing internal innovation. They are:

- Forced innovation

- Exploratory innovation

- Randomized innovation

- Empowered innovation

(source: Conference notes)

Participants were showed the various stuff that the library did such as:

- “Jolt the Library“

- Smart Cushions

- Adjustable Desks

- Noise Canceling Headphones

- Visual Maps in Primo

- Charger cables

among others. (Source: Conference Notes).

Apart from that, I attended several meetings on Summon and 360 products. I had the chance to meet up with the Support Team Lead and the Director of Support for EMEA region. During that meetings, I aired our library’s issues concerning Summon and 360 products.

During the downtime, I had the chance to visit some of Trondheim’s places of interest namely: Nidaros, Old Town Bridge, Historic Wharves, Bakklandet. Noted that most of the people in Trondheim cycled a lot, jog and walk around town. Taxi rides are expensive. I took a 3 min ride and it costs around 20 SGD.

One of the things that impressed me was an incident at Trondheim airport. An elderly lady apparently placed her passport in her check-in luggage by mistake. The desk airport staff managed to resolve the issue in less than an hour; contacting the baggage airport staff to isolate the bag and allowed the passenger to retrieve her passport. Talk about efficiency 🙂

Here are some photos of my trip: